2025 saw a dramatic change in the fortunes of AI chip makers, with Nvidia in particular benefitting. The growth in AI, driven by companies such as OpenAI, catapulted Nvidia into the top slot as the world’s most valuable company, while others fell by the wayside.

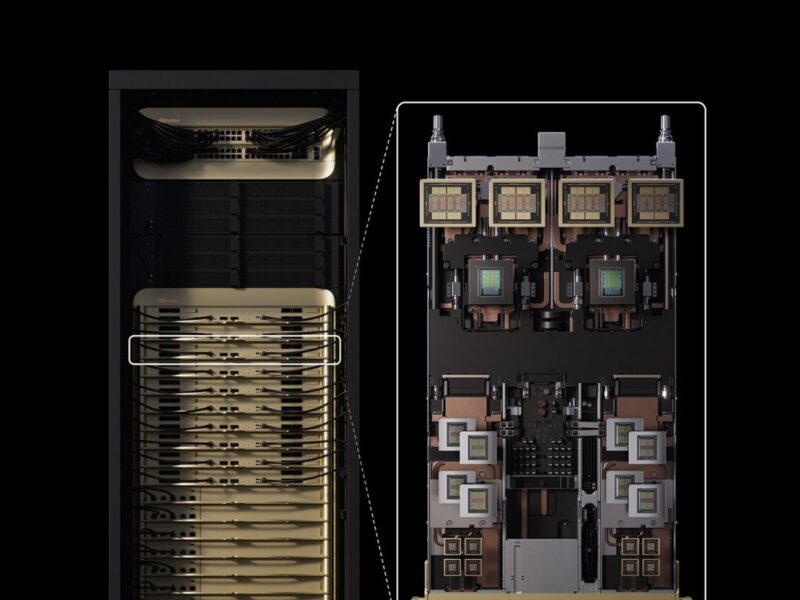

At CES in January Nvidia announced its desktop AI machine, then called Digits. This uses the GB10 chip with the Grace processor and Blackwell graphics processing unit (GPU) and 128GB of unified memory to run models with up to 200bn parameters. This evolved through the year to become the DGX Spark, and the first version of this was delivered to Elon Musk, CEO of Tesla and SpaceX, in September.

Other early recipients of DGX Spark included Anaconda, Cadence Design Systems, ComfyUI, Docker, Google, Hugging Face, JetBrains, LM Studio, Meta, Microsoft, Ollama and Roboflow, which are validating and optimizing their tools, software and models for the desktop unit. The 240W design is also being made by Acer, ASUS, Dell Technologies, GIGABYTE, HP, Lenovo and MSI.

The successor to Blackwell, called Rubin CPX, is also emerging to power the next generation of AI factories. Announced in September, the chip is scheduled to be available by the end of 2026. Combined with the Vera CPU and 100TBytes of high bandwidth memory (HBM4), Rubin CPX will provide 8 exaflops of AI performance in a single rack in the datacentre, 7.5x more performance than the GB300 NVL72 systems that are shipping today.

“The Vera Rubin platform will mark another leap in the frontier of AI computing — introducing both the next-generation Rubin GPU and a new category of processors called CPX,” said Jensen Huang, founder and CEO of NVIDIA. “Just as RTX revolutionized graphics and physical AI, Rubin CPX is the first CUDA GPU purpose-built for massive-context AI, where models reason across millions of tokens of knowledge at once.”

Rubin CPX delivers up to 30 petaflops of 4-bit compute with 128GB of cost-efficient GDDR7 memory. It also integrates video decoders and encoders, as well as long-context inference processing, in a single chip for higher performance in long-format applications such as video search and high-quality generative video.

AMD has been running fast to catch up in AI. The Instinct MI350 and MI355 GPUs aim to tap into AMD’s CPUs in the datacentre, providing a 4x boost in AI training and up to 35x in inferencing performance. These are pushing the edge of the power envelope at 1000W and 1400W respectively.

However, it was not all positive. Industry analysts highlight the risks of the interconnected nature of AI, and warn that many AI chip ventures will fail.

The industry saw RISC-V AI chip developer Esperanto Technologies close, while the assets of Untether AI were acquired by AMD, MIPS was acquired by GlobalFoundries in August and Kinara was acquired by NXP Semiconductors.

Robotics

Humanoid robotics was one of the emerging sectors for AI, with Samsung starting the year accelerating its developments. Nvidia was again at the forefront with its Jetson GPUs, models and open source tools for training these robots.

But there have been other innovations. The MAVERIC chip built at the University of California, Berkeley, for instance, uses a 16nm process with four RISC-V processor cores and 13 AI accelerator cores. These can provide AI vision analysis at 72 frames/s for robotics applications.

China has been driving humanoid robotics technology, starting with car makers but also with a view to military applications.

Fourier Intelligence in Shanghai has developed its second generation GR-2 bipedal robot with 53 degrees of freedom, and lays claim to the first commercial humanoid robot being used by car makers. Chinese car manufacturers can also pre-order humanoid prototypes from UBtech in Shenzhen, and the company says it has received 500 orders to date.

Shanghai Kepler Robotics is working with 50 target customers on real-world scenarios for its K2 humanoid robot in intelligent manufacturing, warehousing and logistics, and the company says the K2 has ‘nearly’ mastered the ability to autonomously complete specific tasks.

Engine AI in Shenzhen has also launched its PM01 robot, based on the Jetson Orin compute modules from Nvidia. These humanoid robots have been tested in car factories through 2025 and the expectation is that it will take two to five years for the technology to migrate from industrial applications to the consumer market.

1X in the US is aiming to push this along faster, launching what it calls the world’s first consumer-ready humanoid robot in October. NEO automates everyday chores, says the company, using Nvidia’s Jetson Thor chip, which shipped in August and is also being used to provide Level 4 autonomy in driverless vehicles, from cars to trucks.

“Humanoids were long a thing of sci-fi… then they were a thing of research, but today — with the launch of NEO — humanoid robots become a product. Something that you and me can reach out and touch. NEO closes the gap between our imaginations and the world we live in, to the point where we can actually ask a humanoid robot for help, and help is granted,” said Bernt Børnich, CEO and Founder of 1X.

Edge AI

One of the interesting emerging chip suppliers was DeepX. The Korean company is looking at a Raspberry Pi card for its DX-M1 edge AI accelerator chip that sampled early in the year. It is also planning a 2nm version, the M2, with the same 5W power envelope.

It is up against Israeli company Hailo, which launched its 10H edge AI processor designed to run generative AI models directly on devices without relying on cloud infrastructure. The new chip implements transformer algorithms to provide native support for large language models (LLMs) and vision-language models (VLMs) to edge applications. The company also signed a deal with Avnet ASIC to access the latest technologies at foundry TSMC.

BrainChip has also raised $25m to accelerate development and commercialization of its neuromorphic edge AI technology. The funding will scale up production of its AKD1500 edge AI chip aimed at sensors, medical devices, and wearables, where low BOM cost and minimal power budgets are critical. The chip supports on-device large language models using its TENNs architecture, enabling real-time and private GenAI without sending data to the cloud. The Akida 2 platform adds low-power on-device learning, targeting applications that need adaptive intelligence at the edge rather than fixed inference.

NXP is also using its $307m acquisition of transformer edge AI chip designer Kinara to create an AI ecosystem in the automotive industry similar to Nvidia’s CUDA.

The initial target for the Kinara Ara-2 generative AI chip is in the vehicle cabin for driver monitoring or infotainment copilots to query a manual or monitor the cabin and respond to conditions, says Rutger Vrijen, senior vice president and head of strategy for the connected edge at NXP.

“There’s tremendous opportunities to help Kinara scale in combination with the broader portfolio. But the longer term vision is to make it really easy for our customers to adopt this across all our devices,” he said. “We are not at CUDA level yet but the software and toolchain first strategy is something that will enable us to do that. Kinara is not just a provider of silicon but focussed on making the silicon usable.”

NXP has already integrated the toolchain and model zoo into its eIQ tool along with NXP Linux and FreeRTOS distributions.

Nvidia finished the year with a valuation of $4.4tn, topping Apple at $4.0tn, Alphabet/Google at $3.6tn and Microsoft at $3.6tn.

This valuation has given Nvidia a significant advantage. It allows the company to make investments in the AI companies that buy its chips, including OpenAI, xAI and CoreWeave, as well as telecoms giant Nokia, and gives it a strong currency for deals. One of those key deals was an investment in Intel, developing a custom CPU to sit alongside the Rubin GPU and potentially acquiring parts of the company.

The strength of the AI market is a boon to Nvidia in particular, and it has been taking advantage of that strength. With Jetson Thor shipping now, and Rubin CPX shipping in 2026, the company is ramping up the performance boost across a range of AI markets.
