US startup EnCharge AI has launched its analog in-memory compute AI chip for low-power inference on the desktop and at the edge.

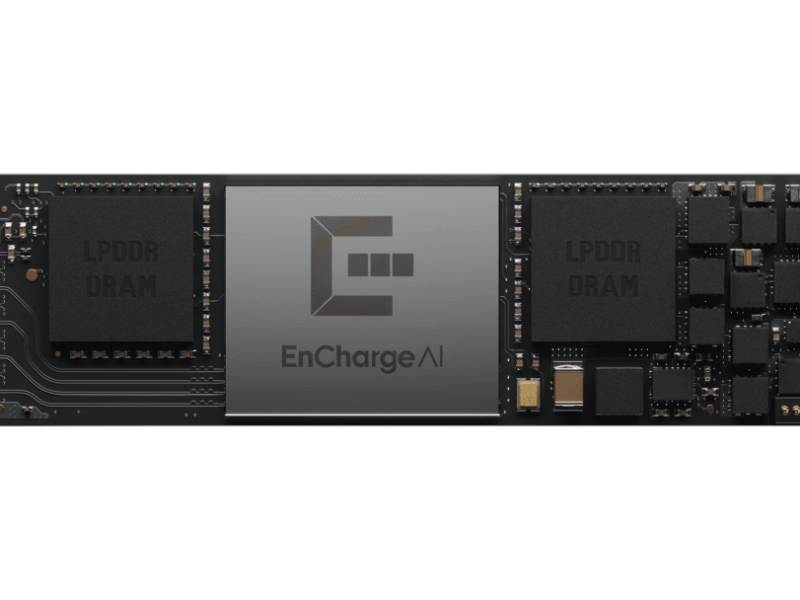

The EN100 provides up to 200 TOPS of AI inference by using an array of capacitors formed from the metal wires to store weights in the chip, avoiding the power wasted on off-chip data transfers. It also includes custom RISC-V processor cores.
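The capacitor-based approach can be illustrated with an idealised numerical model of a single compute column. This is a textbook sketch of charge-domain in-memory multiply-accumulate, not EnCharge's actual circuit: the binary (+1/-1) inputs and weights, the equal unit capacitors, the 8-bit ADC and the function name `charge_domain_mac` are all assumptions made for illustration. Each cell deposits charge on its capacitor according to the product of its input and weight, and shorting the capacitors together averages that charge, so the shared voltage encodes the dot product, which an ADC then digitises.

```python
import numpy as np


def charge_domain_mac(inputs, weights, c_unit=1.0, v_dd=1.0, adc_bits=8):
    """Idealised charge-domain dot product for one in-memory compute column.

    Hypothetical model: binary inputs and weights (+1/-1), one equal unit
    capacitor per cell, ideal switches and an ideal ADC.
    """
    inputs = np.asarray(inputs)
    weights = np.asarray(weights)
    n = inputs.size

    # Each cell multiplies locally: the product is +1 or -1.
    products = inputs * weights

    # A +1 product charges the cell capacitor to v_dd; a -1 product leaves it at 0 V.
    charges = np.where(products > 0, c_unit * v_dd, 0.0)

    # Shorting all capacitors together shares the charge: V = Q_total / C_total.
    v_col = charges.sum() / (n * c_unit)

    # The ADC quantises the shared voltage to 2**adc_bits - 1 steps.
    levels = 2 ** adc_bits - 1
    code = int(np.rint(v_col / v_dd * levels))

    # code / levels estimates the fraction p / n of +1 products; dot = p - (n - p).
    frac_pos = code / levels
    return 2 * frac_pos * n - n


rng = np.random.default_rng(0)
x = rng.choice([-1, 1], size=64)
w = rng.choice([-1, 1], size=64)
print("analog estimate:", charge_domain_mac(x, w), "exact:", int(x @ w))
```

In this model the multiplications happen where the weights sit and the summation is a single charge-sharing step, so no weight has to move off chip; the trade-off is the ADC, whose resolution bounds the error (at most n/255 for an 8-bit converter here).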

The chip is available on two cards: a full-sized PCI Express card with four chips for workstations, and an M.2 PCIe card for laptops and embedded and edge applications. The M.2 card has 32GB of low-power LPDDR5 memory and a power consumption of 8.25W.

With 1 PetaOPS of performance, the full-sized PCIe card has 128GB of LPDDR5 memory, a bandwidth of 272GB/s and a power consumption of around 40W.

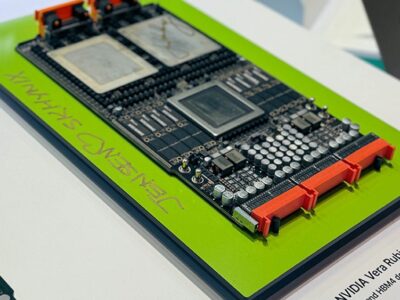

This will take on Nvidia's DGX Spark, a standalone desktop unit based on the GB10 Blackwell GPU alongside a Grace CPU with 20 Arm cores in the same package, connected to 128GB of LPDDR5 memory. This provides a similar 1000 TOPS of performance with 273GB/s of bandwidth. Nvidia has not yet detailed the power consumption of the DGX Spark, but reports put it at 170W, although this figure includes a 4TB solid-state drive.
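The efficiency gap can be made concrete with the headline figures quoted in the article. This rough sketch takes every TOPS and power number at face value, which is an assumption: vendors may quote TOPS at different numeric precisions, the PCIe card's ~40W is approximate, and the DGX Spark's 170W is a reported system figure that includes storage. The `systems` dictionary and the `tops_per_watt` helper are illustrative names.

```python
# Headline figures from the article as (TOPS, watts); taken at face value.
systems = {
    "EN100 M.2 card": (200, 8.25),
    "EN100 PCIe card (4 chips)": (1000, 40),
    "Nvidia DGX Spark (reported power)": (1000, 170),
}


def tops_per_watt(tops, watts):
    """Throughput per watt; only meaningful if the TOPS use the same precision."""
    return tops / watts


for name, (tops, watts) in systems.items():
    print(f"{name:36s} {tops_per_watt(tops, watts):6.2f} TOPS/W")

# System-level ratio between the two ~1000 TOPS products.
ratio = tops_per_watt(1000, 40) / tops_per_watt(1000, 170)
print(f"EN100 PCIe card vs DGX Spark: {ratio:.2f}x")
```

On these numbers both EN100 cards come out at roughly 24 to 25 TOPS/W against about 6 TOPS/W for the DGX Spark, a ratio of about 4x at system level.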

All of this highlights the push towards local AI inference on the desktop, where developers can test multimodal models that need more performance.

“AI is moving out of the data centre to scale up, moving into laptops and desktops, edge servers. These are often battery powered or space constrained, and that’s where we have found a lot of traction,” said Naveen Verma, CEO of EnCharge AI and professor of electrical and computer engineering at Princeton University, where the technology was developed.

“EN100 represents a fundamental shift in AI computing architecture, rooted in hardware and software innovations that have been de-risked through fundamental research spanning multiple generations of silicon development,” said Verma. “This means advanced, secure, and personalized AI can run locally, without relying on cloud infrastructure. We hope this will radically expand what you can do with AI.”

A customised software suite is needed for the in-memory compute chip. It combines specialised optimisation tools, high-performance compilation and extensive development resources, all supporting popular frameworks such as PyTorch and TensorFlow.

EnCharge claims EN100 delivers up to 20x better performance per watt than competing solutions across various AI workloads. With up to 128GB of high-density LPDDR memory and bandwidth reaching 272GB/s, EN100 can handle sophisticated AI tasks, such as generative language models and real-time computer vision, that typically require specialised data centre hardware. EnCharge says the chip's programmability allows today's AI models to be optimised and the platform to adapt to the AI models of tomorrow.
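Memory bandwidth matters for generative language models because token-by-token decoding is often limited by how fast weights can be read from memory. A common back-of-the-envelope bound divides bandwidth by the bytes of weights read per generated token. The sketch below applies it to the 272GB/s and 128GB figures, assuming (hypothetically) that the model weights are streamed from LPDDR5 once per token; it ignores KV-cache traffic, compute limits and batching, and the model sizes and the function name `max_decode_tokens_per_s` are illustrative, not EnCharge data.

```python
def max_decode_tokens_per_s(bandwidth_gb_s, params_billion, bytes_per_param):
    """Upper bound on single-stream decode speed for a memory-bound model.

    Assumes all weights are read once per generated token; real throughput
    will be lower once KV-cache traffic and compute limits are included.
    """
    model_gb = params_billion * bytes_per_param  # weight footprint in GB (1e9 bytes)
    return bandwidth_gb_s / model_gb


BANDWIDTH_GB_S = 272   # PCIe card bandwidth quoted in the article
MEMORY_GB = 128        # PCIe card LPDDR5 capacity quoted in the article

# Hypothetical model sizes: (billions of parameters, bytes per parameter).
for params, bpp in [(8, 1.0), (8, 0.5), (70, 0.5)]:
    fits = params * bpp <= MEMORY_GB
    rate = max_decode_tokens_per_s(BANDWIDTH_GB_S, params, bpp)
    print(f"{params}B params @ {bpp} B/param: fits={fits}, <= {rate:.1f} tokens/s")
```

Halving the bytes per parameter (for example 8-bit to 4-bit weights) doubles this bound, which is why quantisation and bandwidth are usually discussed together for local inference.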

“The real magic of EN100 is that it makes transformative efficiency for AI inference easily accessible to our partners, which can be used to help them achieve their ambitious AI roadmaps,” says Ram Rangarajan, Senior Vice President of Product and Strategy at EnCharge AI. “For client platforms, EN100 can bring sophisticated AI capabilities on device, enabling a new generation of intelligent applications that are not only faster and more responsive but also more secure and personalized.”

Early adoption partners have already begun working closely with EnCharge to map out how to use the in-memory compute approach for both CNN and transformer AI models as well as always-on multimodal AI agents and enhanced gaming applications that render realistic environments in real-time.

The first round of EN100’s Early Access Program is currently full, but EnCharge says interested developers and OEMs can sign up for the upcoming Round 2 Early Access Program at env1.enchargeai.com.

If you enjoyed this article, you will like the following ones: don't miss them by subscribing to :

eeNews on Google News