Running GenAI models, particularly large language models (LLMs), on devices like laptops and smartphones poses significant challenges.

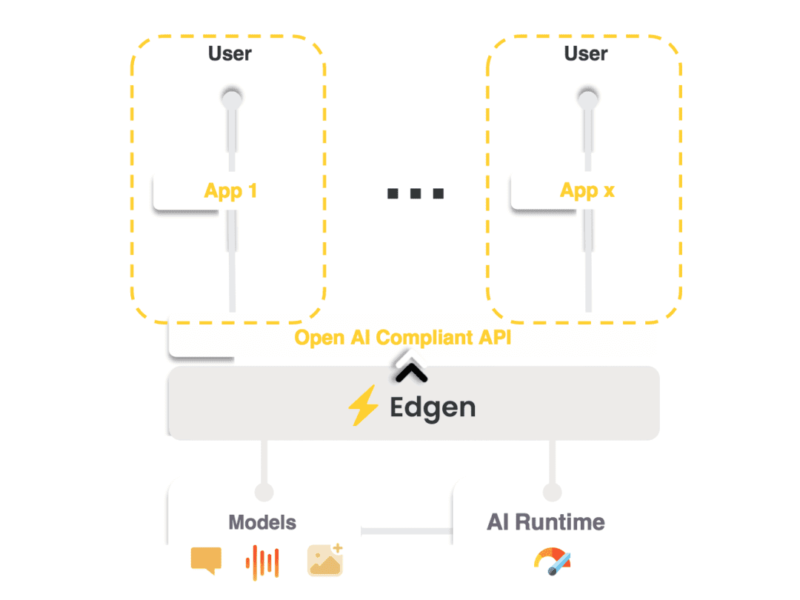

These models demand considerable memory and computational power, traditionally available only in cloud-based environments. Edgen addresses these challenges by providing a local API server that simplifies edge-based GenAI deployment. Using Edgen is similar to using OpenAI’s API, but with the added benefits of on-device processing.
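To illustrate what "similar to using OpenAI’s API" could look like in practice, here is a minimal sketch of a client that builds an OpenAI-style chat completion request aimed at a server on the local machine. The base URL (host, port, and path), the model name `default`, and the helper names `build_chat_request` and `send` are assumptions made for illustration, not values confirmed by this article; check the Edgen documentation for the actual endpoint and model identifiers.

```python
import json
import urllib.request

# Assumption: address of a locally running OpenAI-compatible server.
# Host, port, and path are placeholders, not confirmed by the article.
BASE_URL = "http://localhost:33322/v1"


def build_chat_request(prompt, model="default", base_url=BASE_URL):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,  # hypothetical model name
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request, timeout=60):
    """Send the request and return the text of the first choice.

    Assumes the server replies with the OpenAI chat completion JSON shape.
    """
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

With a local server running, `send(build_chat_request("Hello"))` would return the model's reply. The only difference from a cloud call in this sketch is the base URL, which points at the user's own device instead of a remote service.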

Advantages of On-device Inference

On-device inference brings several advantages when compared to cloud inference:

- Free: It runs locally on hardware the user already owns.

- Optimized: Edgen uses the latest techniques and runtimes to optimize the inference of GenAI models.

- Scalable: More and more users? No need to expand cloud computing infrastructure. Just let your users run inference on their own hardware.

- No internet required: With on-device inference, users don’t need an internet connection.

- Data Private: On-device inference means users’ data never leave their devices.

Discover Edgen: Open Source with a Permissive License
