IBM uses phase-change memory for machine learning

Processing data within memory is an idea gaining ground because it removes the memory-to-processor bottleneck that limits conventional von Neumann architectures and promises to cut power consumption by reducing data movement. The approach is also a closer analog to the way the brain works.

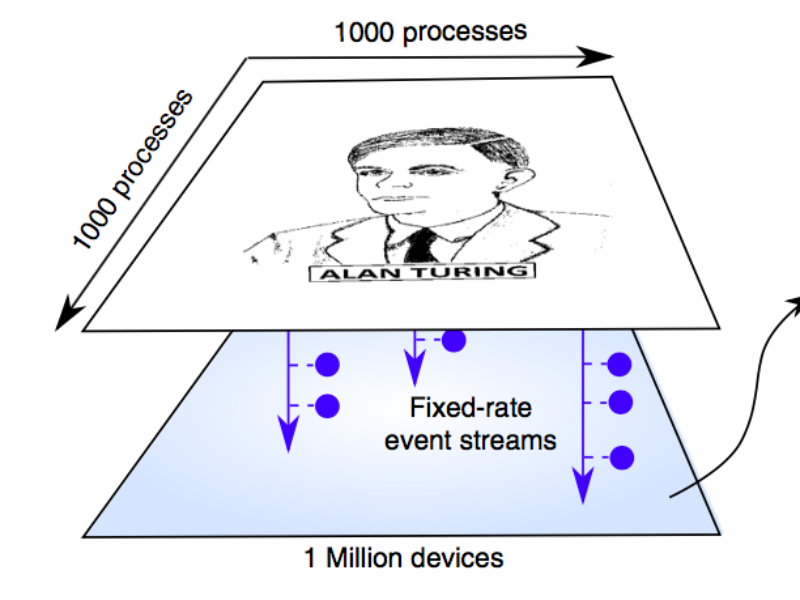

The machine learning was run on a 1Mbit phase-change memory (PCM) array. It was used to learn a 1,000 by 1,000 pixel image of Alan Turing and to find temporal correlations in two data streams of rainfall information.

The learning approaches were compared with conventional machine-learning approaches run on a von Neumann computer and achieved similar results. However, because of the advantages of locality, processing-in-memory is expected to improve both speed and energy efficiency by a factor of 200.

The IBM Zurich team used the crystallization dynamics and conductance of the PCM devices to perform the computations. The devices were based on doped Ge2Sb2Te5 (GST) chalcogenide material integrated in 90nm CMOS. The bottom electrode has a radius of approximately 20nm, and the phase-change material is approximately 100nm thick and extends to the top electrode.
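The article does not spell out the algorithm, so the following Python sketch is only a toy illustration of how accumulated conductance could reveal temporal correlations. It assumes one simulated device per binary input stream, a pulse whose strength grows with the momentary collective activity, and a saturating update that crudely stands in for progressive crystallization. The function name, parameters, threshold and update rule are all hypothetical and are not taken from IBM's work.

```python
import random


def detect_correlated(n_devices=100, n_correlated=20, steps=1000,
                      rate=0.05, coupling=0.8, gain=0.005, seed=0):
    """Toy model: return one accumulated 'conductance' value per device.

    Devices 0..n_correlated-1 receive streams that mostly follow a hidden
    common source; the remaining devices receive independent streams.
    All parameters are illustrative assumptions.
    """
    rng = random.Random(seed)
    conductance = [0.0] * n_devices
    # Only collective activity well above the expected level triggers a pulse.
    threshold = 2 * rate * n_devices

    for _ in range(steps):
        common = rng.random() < rate  # hidden source shared by the correlated group
        bits = []
        for i in range(n_devices):
            if i < n_correlated and rng.random() < coupling:
                bits.append(common)               # follow the common source
            else:
                bits.append(rng.random() < rate)  # independent event
        activity = sum(bits)                      # how many streams fired together
        pulse = max(0.0, activity - threshold)

        for i, fired in enumerate(bits):
            if fired:
                # Saturating growth toward 1.0, a crude stand-in for
                # progressive crystallization raising the conductance.
                conductance[i] += gain * pulse * (1.0 - conductance[i])
    return conductance


if __name__ == "__main__":
    g = detect_correlated()
    correlated = sum(g[:20]) / 20
    uncorrelated = sum(g[20:]) / 80
    print(f"mean conductance, correlated group:   {correlated:.2f}")
    print(f"mean conductance, uncorrelated group: {uncorrelated:.2f}")
```

In this sketch the result of the computation is simply the final state of each simulated device: streams that tend to fire together are pulsed more often and more strongly, so their conductance ends up higher than that of the independent streams.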

“Memory has so far been viewed as a place where we merely store information. But in this work, we conclusively show how we can exploit the physics of these memory devices to also perform a rather high-level computational primitive. The result of the computation is also stored in the memory devices, and in this sense the concept is loosely inspired by how the brain computes,” said Abu Sebastian of IBM Research, lead author of the paper. Sebastian also leads a European Research Council-funded project on this topic.

IBM scientists are due to present another application of in-memory computing at the International Electron Devices Meeting in December 2017.

Related links and articles:

News articles:

Western Digital backs processor-in-memory startup

Machine learning chip startup raises $9 million

Startup plans to embed processors in DRAM

Graphcore’s ‘Colossus’ chip due before end of year

Google’s second TPU processor comes out
