Intel launches second-generation neuromorphic chip on 4nm

At 31mm², Loihi 2 is half the size of its 14nm predecessor but has a similar number of transistors, at 2.3bn. Importantly, it adds high-speed Ethernet and delivers up to 10 times faster processing, up to 15 times greater resource density with up to 1 million neurons per chip, and improved energy efficiency from its use of an early version of the Intel 4 (4nm) process technology.

“Loihi 2 and Lava harvest insights from several years of collaborative research using Loihi. Our second-generation chip greatly improves the speed, programmability, and capacity of neuromorphic processing, broadening its usages in power and latency constrained intelligent computing applications. We are open sourcing Lava to address the need for software convergence, benchmarking, and cross-platform collaboration in the field, and to accelerate our progress toward commercial viability,” said Mike Davies, director of Intel’s Neuromorphic Computing Lab.

The pre-production version of the Intel 4 process uses extreme ultraviolet (EUV) lithography to simplify the layout design rules compared to past process technologies.

The original Loihi supported only binary-valued spike messages; Loihi 2 allows spikes to carry integer-valued payloads at little extra cost in either performance or energy. These generalized spike messages support event-based messaging, preserving the desirable sparse and time-coded communication properties of spiking neural networks (SNNs), while also providing greater numerical precision.

The Loihi 2 chip consists of 128 fully asynchronous neuron cores and six embedded microprocessor cores, double the three in Loihi, all connected by a network-on-chip (NoC). The neuron cores are optimized for neuromorphic workloads: each implements a group of spiking neurons, including all the synapses connecting to those neurons, and all communication between neuron cores takes the form of spike messages. The microprocessor cores are optimized for spike-based communication and execute standard C code to assist with data I/O as well as network configuration, management, and monitoring. Parallel I/O interfaces extend the on-chip mesh across multiple chips, up to 16,384 of them, with direct pin-to-pin wiring between neighbors.

Loihi 2 also offers more standard chip interfaces than Loihi. Its asynchronous chip-to-chip signaling bandwidth is four times higher, a destination spike broadcast feature reduces inter-chip bandwidth utilization by 10x or more in common networks, and six scalability ports per chip support three-dimensional mesh network topologies.

Supported interfaces include 1000BASE-KX, 2500BASE-KX and 10GBASE-KR Ethernet, GPIO, and both synchronous (SPI) and asynchronous (AER) handshaking protocols. A spike I/O module at the edge of the chip provides configurable, hardware-accelerated expansion and encoding of input data into spike messages. This reduces the bandwidth required from the external interface and improves performance while lowering the load on the embedded processors.

Graded spikes allow Loihi 2 to support a new deep neural network (DNN) implementation known as the Sigma-Delta Neural Network (SDNN), which offers large gains in speed and efficiency over the rate-coded spiking neural network approach commonly used on Loihi. SDNNs compute graded activation values in the same way that conventional DNNs do, but they only communicate significant changes as they happen, in a sparse, event-driven manner. Simulations show that SDNNs on Loihi 2 can improve on Loihi’s rate-coded SNNs for DNN inference workloads by more than 10x in both inference speed and energy efficiency.
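To illustrate the "only communicate significant changes" idea, here is a minimal, generic sigma-delta sketch in Python. It is not Intel's SDNN implementation or the Lava API: the names `GradedSpike`, `sigma_delta_encode` and `sigma_delta_decode`, the integer threshold and the activation trace are all hypothetical. The sender emits an integer-valued event (a graded spike) only when its activation has drifted by at least a threshold from the value the receiver already holds (the delta step), and the receiver integrates those events to rebuild the activation (the sigma step).

```python
from dataclasses import dataclass
from typing import List


@dataclass
class GradedSpike:
    t: int        # time step at which the event is sent
    payload: int  # integer-valued payload: the size of the change


def sigma_delta_encode(activations: List[int], threshold: int) -> List[GradedSpike]:
    """Delta step (hypothetical helper): send an event only when the activation
    has moved at least `threshold` away from the receiver's current value."""
    events = []
    sent = 0  # the receiver's current reconstruction of the activation
    for t, a in enumerate(activations):
        delta = a - sent
        if abs(delta) >= threshold:
            events.append(GradedSpike(t, delta))
            sent += delta  # the receiver now holds exactly a
    return events


def sigma_delta_decode(events: List[GradedSpike], n_steps: int) -> List[int]:
    """Sigma step (hypothetical helper): integrate received deltas over time."""
    deltas = {e.t: e.payload for e in events}
    value = 0
    out = []
    for t in range(n_steps):
        value += deltas.get(t, 0)
        out.append(value)
    return out


if __name__ == "__main__":
    acts = [0, 1, 5, 5, 6, 6, 12, 12]  # made-up activation trace
    events = sigma_delta_encode(acts, threshold=3)
    print(events)  # [GradedSpike(t=2, payload=5), GradedSpike(t=6, payload=7)]
    print(sigma_delta_decode(events, len(acts)))  # [0, 0, 5, 5, 5, 5, 12, 12]
```

In this toy trace, eight time steps produce only two events, and the reconstructed value never differs from the true activation by as much as the threshold. A rate-coded scheme with binary spikes would instead have to convey a value such as 12 through many spikes over a time window, which is the kind of traffic the graded payload avoids.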

Rather than optimising for a specific SNN model, Loihi 2 implements its neuron models with a programmable pipeline in each neuromorphic core that supports common arithmetic, comparison, and program control flow instructions. This programmability greatly expands the range of neuron models that can be built using the Lava software framework.
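As a rough illustration of what a programmable neuron pipeline makes possible, the Python sketch below treats a neuron model as a small per-time-step program built only from arithmetic, a comparison and a branch, so that changing the model means swapping the program. It is not Loihi 2 microcode or Lava code; `lif_step`, `graded_step`, `run` and the state fields `v`, `decay` and `vth` are hypothetical names chosen for the example.

```python
from typing import Callable, Dict, List, Optional, Tuple

State = Dict[str, float]
# A "neuron program" (hypothetical): updates state in place for one time step
# and returns a spike payload, or None if the neuron stays silent.
NeuronProgram = Callable[[State, float], Optional[int]]


def lif_step(s: State, x: float) -> Optional[int]:
    """Leaky integrate-and-fire with a binary spike."""
    s["v"] = s["v"] * s["decay"] + x  # arithmetic: leak, then integrate input
    if s["v"] >= s["vth"]:            # comparison plus control flow
        s["v"] = 0.0                  # reset after firing
        return 1                      # binary spike
    return None


def graded_step(s: State, x: float) -> Optional[int]:
    """Same dynamics, but the spike carries an integer payload."""
    s["v"] = s["v"] * s["decay"] + x
    if s["v"] >= s["vth"]:
        payload = int(s["v"])         # graded spike: truncated membrane value
        s["v"] = 0.0
        return payload
    return None


def run(program: NeuronProgram, state: State, inputs: List[float]) -> List[Tuple[int, int]]:
    """Drive one neuron program over an input sequence; collect (t, payload)."""
    spikes = []
    for t, x in enumerate(inputs):
        out = program(state, x)
        if out is not None:
            spikes.append((t, out))
    return spikes


if __name__ == "__main__":
    inputs = [3, 3, 3, 0, 6]  # made-up input currents
    print(run(lif_step, {"v": 0.0, "decay": 0.5, "vth": 4.0}, inputs))
    print(run(graded_step, {"v": 0.0, "decay": 0.5, "vth": 4.0}, inputs))
```

Both programs share the same driver, so moving from binary spikes to graded spikes is a change to the per-neuron program rather than to the surrounding infrastructure.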

Lava is an open, modular, and extensible framework that will allow researchers and application developers to build on each other’s progress and converge on a common set of tools, methods, and libraries. Lava runs seamlessly on heterogeneous architectures spanning conventional and neuromorphic processors, enabling cross-platform execution and interoperability with a variety of artificial intelligence, neuromorphic, and robotics frameworks. Developers can begin building neuromorphic applications without access to specialized neuromorphic hardware, and can contribute to the Lava code base, including porting it to run on other platforms.

“Investigators at Los Alamos National Laboratory have been using the Loihi neuromorphic platform to investigate the trade-offs between quantum and neuromorphic computing, as well as implementing learning processes on-chip,” said Dr. Gerd J. Kunde, staff scientist, Los Alamos National Laboratory. “This research has shown some exciting equivalences between spiking neural networks and quantum annealing approaches for solving hard optimization problems. We have also demonstrated that the backpropagation algorithm, a foundational building block for training neural networks and previously believed not to be implementable on neuromorphic architectures, can be realized efficiently on Loihi. Our team is excited to continue this research with the second generation Loihi 2 chip.”

However, the chips are available only through the Neuromorphic Research Cloud, where teams engaged in the Intel NRC have access to shared systems.

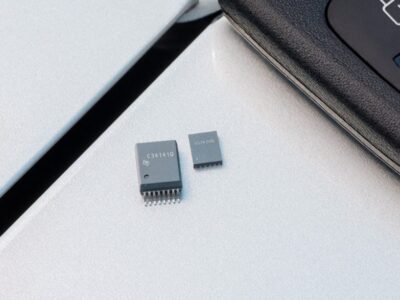

Oheo Gulch is a single-chip system for lab testing with a single Loihi 2 chip instrumented for characterization and debug. An Intel Arria 10 FPGA interfaces to Loihi 2 and provides remote access over Ethernet.

Intel is also working on Kapoho Point, a stackable 8-chip system in a 4 x 4in form factor with an Ethernet interface. The system is aimed at portable projects and exposes general-purpose input/output (GPIO) pins and standard synchronous and asynchronous interfaces for integration with sensors and actuators in embedded edge and robotics applications. Kapoho Point boards can be stacked to create larger systems in multiples of eight chips. Kapoho Point will be available for remote access in the Neuromorphic Research Cloud and on loan to Intel NRC research teams.
