Melexis teams for AI-enabled 3D Time of Flight demonstrator

Belgian sensor maker Melexis is working with emotion3D on a 3D Time-of-Flight (ToF) demonstrator using machine learning.

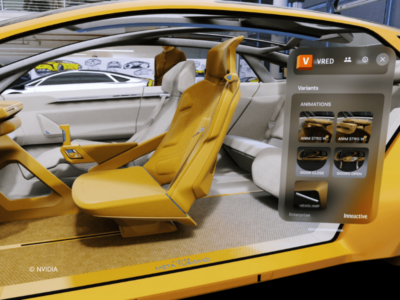

The solution combines an automotive driver monitoring system (DMS) with high-precision 3D driver localization to dynamically align objects shown on augmented reality head-up displays (AR HUDs).

Melexis and emotion3D’s DMS covers all basic functions, such as driver drowsiness and attention warning, to conform to the EU’s General Safety Regulation and Euro NCAP’s new testing protocols. In addition, the demonstrator provides the 3D locations of the driver’s facial landmarks. These matter for the AR HUD user experience: objects projected by the HUD must stay precisely aligned with real-world objects as the driver’s position changes.
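To illustrate why the eye position matters, here is a minimal geometric sketch, not the companies' implementation. It assumes a hypothetical vehicle coordinate frame in metres with z pointing forward, and it models the HUD's virtual image as a flat plane at a fixed distance ahead. The function name `hud_draw_point` and all numbers are illustrative assumptions. The overlay is drawn where the sight line from the eye to the real-world object crosses that plane.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def hud_draw_point(eye: Vec3, target: Vec3, plane_z: float) -> Tuple[float, float]:
    """Return the (x, y) point on the plane z = plane_z that lies on the
    straight line from the eye to the target (line-plane intersection)."""
    ex, ey, ez = eye
    tx, ty, tz = target
    if tz == ez:
        raise ValueError("sight line is parallel to the image plane")
    # Fraction of the way from the eye to the target at which the plane is hit.
    s = (plane_z - ez) / (tz - ez)
    return (ex + s * (tx - ex), ey + s * (ty - ey))


# Hypothetical values: object 20 m ahead, virtual image plane 2.5 m ahead.
target = (2.0, 1.0, 20.0)
centred = hud_draw_point((0.0, 0.0, 0.0), target, plane_z=2.5)
shifted = hud_draw_point((0.1, 0.0, 0.0), target, plane_z=2.5)
print(centred, shifted)
```

In this simplified model, moving the eye 10 cm sideways shifts the required draw position by almost 9 cm on the virtual image plane, which is why the overlay has to follow the measured eye position.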

“We combine accurate and robust 3D eye position detection for HUD with sunlight-invariant eye gaze and eye openness detection for leaner DMS algorithm implementations,” said Gualtiero Bagnuoli, Product Marketing Manager at Melexis. “Use of ToF technology is key. It is very easy to get accurate depth data from the 3D ToF sensor with low processing effort. The result is that the DMS and HUD algorithms work impressively well with wide field-of-view lenses and VGA-resolution ToF sensors.”

The demonstrator consists of a camera built around Melexis’ MLX75027 3D ToF sensor and emotion3D’s in-cabin analysis software (E3D ICMS). The software can be flexibly integrated in any automotive SoC of choice.

“Regulatory requirements make it necessary to integrate DMS into new vehicles and augmented reality head-up displays become more and more popular. Our combined system offers a highly precise and cost-efficient solution for automotive manufacturers,” said Florian Seitner, CEO of emotion3D.

www.melexis.com; www.emotion3d.ai

Related articles

- Melexis upgrades ToF image sensor for QVGA

- Melexis CEO: Strong alone

- Melexis announces Dresden R&D centre
