ResNet-50 – a misleading machine learning inference benchmark for megapixel images

Over the last six months, there has been a rapid influx of new inference chip announcements. As each new chip has been launched, the only indicator of performance given by the vendor has usually been TOPS. Unfortunately, TOPS doesn’t measure throughput for a given model, which is really the only way to measure an inference chip’s performance. TOPS simply gives the potential peak performance: trillions of operations per second, calculated as the number of MACs in the chip times the frequency of MAC operations times 2 (each MAC counts as two operations, a multiply and an add).
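The peak TOPS arithmetic can be sketched in a few lines. The chip below (4,096 MACs at 1 GHz) is a made-up example for illustration, not a figure from any vendor.

```python
def peak_tops(num_macs: int, mac_clock_hz: float) -> float:
    """Peak TOPS = MACs x MAC frequency x 2 (multiply + add), in trillions."""
    return num_macs * mac_clock_hz * 2 / 1e12

# Hypothetical chip: 4,096 MACs clocked at 1 GHz (illustrative numbers only).
print(peak_tops(4096, 1e9))  # 8.192
```

Nothing in this calculation depends on the model, the image size, or the memory system, which is why it says so little about real throughput.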

When a benchmark is given by an inference chip vendor, there is typically just one, and it is almost always ResNet-50, usually with the batch size omitted. ResNet-50 is a classification benchmark that uses images of 224 pixels x 224 pixels, and performance is typically measured with INT8 operation. However, ResNet-50 is a very misleading benchmark for megapixel images because all models that process megapixel images use memory very differently than ResNet-50 does with its tiny 224×224 images. This article will explain why and highlight a more accurate way to benchmark inference on megapixel images.

Batch Size Matters

When ResNet-50 throughput is quoted, it is very common that batch size is not mentioned, even though batch size has a huge effect on throughput. For example, for the Nvidia Tesla T4, ResNet-50 throughput at batch = 1 is 25% of what it is at batch = 32, a 4x difference. When batch size is not specified, customers should assume that it was measured at a large batch size that maximized throughput.

Even more interesting is that no customer actually uses ResNet-50 in any real world applications. Customers have image sensors that give megapixel images and they want to do real time object detection and recognition on them using YOLOv2 or YOLOv3. Larger images give higher prediction accuracy, and more challenging models give higher prediction accuracy.

Applications

In applications where it is time critical to detect objects quickly, large batches increase latency and therefore the time to detect objects. Batch=1 is the preferable mode for applications where object detection and recognition is time critical, and large images are preferable for applications where safety is critical. Also, as we’ll see below, batching does not necessarily improve performance as image sizes increase.

Why do larger batches increase throughput for ResNet-50 and other models with small images?

ResNet-50 has the following:

- Input images of 224 by 224 pixels by 3 bytes (RGB) = about 0.15Mbytes

- Weights of 22.7Mbytes
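As a quick check of how small the input is next to the weights, the sizes can be worked out directly. This sketch assumes 1 byte per colour channel (INT8) and takes 1 Mbyte as 10^6 bytes; the 22.7 Mbyte weight figure is the one quoted above.

```python
# Dimensions and weight size from the text; 1 byte per channel is assumed (INT8).
WIDTH, HEIGHT, CHANNELS = 224, 224, 3
WEIGHTS_MB = 22.7

input_bytes = WIDTH * HEIGHT * CHANNELS
input_mb = input_bytes / 1e6  # assumption: 1 Mbyte = 10**6 bytes

print(input_bytes)                    # 150528
print(round(input_mb, 2))             # 0.15
print(round(WEIGHTS_MB / input_mb))   # weights-to-input ratio, about 151
```

The weights are roughly 150 times larger than a single input image, which is the starting point for everything that follows.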

All inference accelerators have some number of MB (megabytes) of on-chip SRAM. If total storage requirements exceed the on-chip SRAM, everything else must be stored in DRAM.

To better explain the tradeoffs between different amounts of on-chip SRAM, consider three example inference chips:

- Chip A: 8Mbytes

- Chip B: 64Mbytes

- Chip C: 256Mbytes

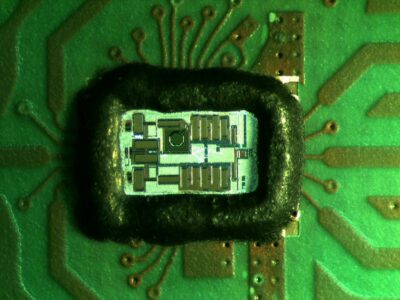

For example, in TSMC’s 16nm process, 1Mbyte of SRAM occupies about 1.1 square millimeters of die area, which would make Chip C very big and expensive.
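To put numbers on that, here is a rough estimate of the SRAM die area for each of the three example chips, using the 1.1 square millimeters per Mbyte figure quoted above. It counts SRAM only and ignores compute logic, I/O, and everything else on the die.

```python
MM2_PER_MBYTE = 1.1  # TSMC 16nm SRAM density quoted in the text

CHIP_SRAM_MBYTES = {"Chip A": 8, "Chip B": 64, "Chip C": 256}

def sram_area_mm2(sram_mbytes: float) -> float:
    """Die area taken by the SRAM alone, in square millimeters."""
    return sram_mbytes * MM2_PER_MBYTE

for name, mbytes in CHIP_SRAM_MBYTES.items():
    print(f"{name}: {mbytes} Mbytes -> {sram_area_mm2(mbytes):.1f} mm^2")
```

This gives about 8.8, 70.4, and 281.6 square millimeters respectively.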

The 3 things that need to be stored by inference accelerators are:

- Weights

- Intermediate activations (the outputs of each layer: ResNet-50 has about 100)

- Code to control the accelerator (we’ll ignore this in the rest of this discussion because almost nobody has disclosed this information)

Performance will be highest if everything fits in SRAM. If not, what to keep in SRAM and what to keep in DRAM to maximize throughput depends on the nature of the model (the relative sizes of weights and intermediate activations) and on the inference chip architecture (what it is good at and what it is weak at).

In models such as ResNet-50 and most CNNs, the activations output from Layer N are the input to Layer N+1 and are not needed again.

In ResNet-50, the largest activation is 0.8MB. Typically, one of the layers before or after will have half the activation size. Thus, at batch=1, 1.2MB of temporary activation storage means that no activations need to be written to DRAM. If all activations had to be written to DRAM, it would mean almost 10MB of activations written out and 10MB read back in. In other words, 1.2MB of on-chip storage for temporary activations avoids 20MB of DRAM traffic per frame, which is almost as much as the 22.7MB of weights.
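The activation arithmetic for batch=1 can be laid out as a short sketch. All figures are the ones quoted above; the 10MB total is the article's "almost 10MB" rounded up, so the result is an upper estimate.

```python
LARGEST_ACT_MB = 0.8                   # largest single-layer activation
ADJACENT_ACT_MB = LARGEST_ACT_MB / 2   # neighbouring layer, about half the size
TOTAL_ACT_MB = 10.0                    # all activations, "almost 10MB" rounded up
WEIGHTS_MB = 22.7

# SRAM needed to hold one layer's input and output at the same time.
sram_needed_mb = LARGEST_ACT_MB + ADJACENT_ACT_MB

# Without that SRAM, every activation is written to DRAM and read back.
dram_traffic_avoided_mb = 2 * TOTAL_ACT_MB

print(f"SRAM for temporary activations: {sram_needed_mb:.1f} MB")
print(f"DRAM traffic avoided per frame: {dram_traffic_avoided_mb:.0f} MB")
print(f"Compared with weights: {dram_traffic_avoided_mb / WEIGHTS_MB:.0%}")
```

The last line comes out at about 88%, which is what "almost as much as the weights" means in numbers.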

Weights

Keeping weights on chip only helps if there is room to keep all 22.7MB on chip. In the case of Chip A and Chip B, there is not enough room to hold everything. Only Chip C can hold the weights and the intermediate activations. However, it takes a long time to read in the 22.7MB of weights.

The idea of batching is actually quite simple. If performance is limited by reading in weights, then you need to process more images each time the weights are available to improve throughput. With a batch size of 10, you can read in the weights once for every 10 images, thus spreading the weight-loading slowdown across more workload. At 1.2MB of on-chip SRAM needed per image, just 12MB of SRAM for temporary activations allows batch=10 without using DRAM bandwidth for activations.

In Chip B, there is room to hold enough temporary activations to store all of them in on-chip SRAM, so batch=10 will definitely accelerate performance. In Chip A, the smaller on-chip activations can be stored on-chip and batch=10 will help, but not as much because larger activations will require some DRAM bandwidth.
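The batching tradeoff can be sketched as follows. This is a simplified model, assuming the weights are streamed in once per batch and that activation SRAM scales linearly with batch size; it ignores any other use of on-chip memory.

```python
WEIGHTS_MB = 22.7
ACT_SRAM_PER_IMAGE_MB = 1.2
CHIP_SRAM_MB = {"Chip A": 8, "Chip B": 64, "Chip C": 256}

def weight_traffic_per_image_mb(batch: int) -> float:
    """Weight bytes loaded per image when weights are read once per batch."""
    return WEIGHTS_MB / batch

def activation_sram_mb(batch: int) -> float:
    """SRAM needed to keep every image's temporary activations on chip."""
    return ACT_SRAM_PER_IMAGE_MB * batch

for batch in (1, 10):
    print(f"batch={batch}: {weight_traffic_per_image_mb(batch):.2f} MB of weights "
          f"per image, {activation_sram_mb(batch):.1f} MB of activation SRAM")

needed = activation_sram_mb(10)
for name, sram_mb in CHIP_SRAM_MB.items():
    print(f"{name}: all batch=10 activations on chip? {sram_mb >= needed}")
```

At batch=10 the weight traffic per image drops from 22.7MB to 2.27MB. Chip A's 8MB falls short of the 12MB needed for activations, while Chip B and Chip C have room.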

For many architectures and SRAM capacities, loading weights is the performance limiter. This is why larger batch sizes make sense for higher throughput (although they increase latency). This is true for ResNet-50 and many “simple” models because all of them use small image sizes.

So large batch sizes always give higher throughput?

Most people assume that every model will have higher throughput at larger batch sizes.

However, this assumption is based on the common benchmarks most chips quote today, which are ResNet-50, GoogLeNet, and MobileNet. It is important to note that the thing all of them have in common is image sizes that are not real-world: 224×224 or 299×299 or even 408×408. All of these are smaller than even VGA, a very old computer display standard, which is 640×480. Typical 2 megapixel image sensors are >40 times larger than 224×224.
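The pixel counts are easy to compare directly. The sketch below assumes a 1920×1080 frame as the "typical 2 megapixel" sensor; that resolution is an assumption, since the article does not name one.

```python
VGA_PIXELS = 640 * 480
SENSOR_2MP_PIXELS = 1920 * 1080  # assumption: 1080p stands in for a 2 MP sensor

BENCHMARK_SIZES = {"224x224": 224 * 224, "299x299": 299 * 299, "408x408": 408 * 408}

for label, pixels in BENCHMARK_SIZES.items():
    print(f"{label}: {pixels} pixels, {pixels / VGA_PIXELS:.0%} of VGA")

ratio = SENSOR_2MP_PIXELS / BENCHMARK_SIZES["224x224"]
print(f"2 MP sensor vs 224x224: {ratio:.1f}x")
```

Even the largest of the three benchmark sizes is only about half of VGA, and the assumed 2 megapixel frame has about 41 times as many pixels as a 224×224 image.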

As image size grows, the weight size doesn’t change. However, the size of the intermediate activations grows in proportion to the input image size. The largest activation of any layer for ResNet-50 is 0.8MB, but when ResNet-50 is modified for 2 megapixel images, the largest activation grows to 33.5MB. Just for batch=1, on-chip SRAM storage for activations needs to be 33.5MB for the largest activation plus ½ that for the leading or following activation = 50MB. This by itself is a large amount of on-chip SRAM, and more than double the 22.7MB of weights. In this case, it might give higher performance to keep the weights on-chip and store at least the larger activations in DRAM.
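A rough proportional estimate reproduces these numbers closely. The sketch assumes a 1920×1080 input and pure linear scaling with pixel count, both of which are assumptions; the article's 33.5MB presumably reflects the exact layer dimensions of the modified model, so the scaled value is only approximately equal to it.

```python
LARGEST_ACT_224_MB = 0.8         # largest activation at 224x224, from the text
LARGEST_ACT_2MP_MB = 33.5        # largest activation at 2 megapixels, from the text

# Assumption: 1920x1080 input, activations scale linearly with pixel count.
pixel_ratio = (1920 * 1080) / (224 * 224)
scaled_estimate_mb = LARGEST_ACT_224_MB * pixel_ratio

# Largest activation plus a neighbouring one of about half the size.
sram_batch1_mb = LARGEST_ACT_2MP_MB * 1.5

print(f"Scaled estimate of largest activation: {scaled_estimate_mb:.1f} MB")
print(f"Activation SRAM needed at batch=1: {sram_batch1_mb:.2f} MB")
```

The scaled estimate comes out at about 33MB, and the batch=1 requirement at 50.25MB, which the article rounds to 50MB.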

If you consider large batch sizes such as batch=10 for ResNet-50 at 2 megapixels, there would need to be 500MB of on-chip SRAM to store all the temporary activations. Even Chip C, the largest, does not have enough SRAM for a large batch size. This increase in activation size at megapixel resolution, which applies to ALL models, is why large batch sizes don’t deliver higher throughput for megapixel images.
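To see how far each example chip is from batch=10, the sketch below computes the largest batch whose temporary activations would fit on chip. It uses the rounded 50MB per image from above and, as a simplification, gives the whole SRAM to activations, leaving no room for weights or code.

```python
ACT_SRAM_PER_IMAGE_MB = 50   # batch=1 activation storage at 2 megapixels
CHIP_SRAM_MB = {"Chip A": 8, "Chip B": 64, "Chip C": 256}

def max_batch_on_chip(sram_mb: int) -> int:
    """Largest batch whose activations fit, if SRAM held nothing else."""
    return sram_mb // ACT_SRAM_PER_IMAGE_MB

print(f"batch=10 needs {10 * ACT_SRAM_PER_IMAGE_MB} MB")
for name, sram_mb in CHIP_SRAM_MB.items():
    print(f"{name} ({sram_mb} MB): max batch {max_batch_on_chip(sram_mb)}")
```

Under these generous assumptions, Chip A cannot hold even one image's activations, Chip B holds one, and Chip C tops out at a batch of 5.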

Conclusion

All models that process megapixel images will use memory very differently than tiny models like ResNet-50 at 224×224. The ratio of weights to activations flips for large images. To really get a sense for how well an inference accelerator will perform for any CNN with megapixel images, a vendor needs to look at a benchmark that uses megapixel images. The clear industry trend is toward larger models and larger images, so YOLOv3 is more representative of the future of inference acceleration. Using on-chip memory effectively will be critical for low cost/low power inference.

Geoff Tate is the CEO of Flex Logix Technologies Inc. (Mountain View, Calif.), a licensor of FPGA fabric and neural network acceleration cores. Prior to his current position Tate was a co-founder and CEO of Rambus Inc.


