Nvidia has launched a chiplet programme that allows third-party processors or accelerators to be used in its AI stacks. However, the deal highlights the challenges of staying neutral in the legal battle between ARM and Qualcomm.

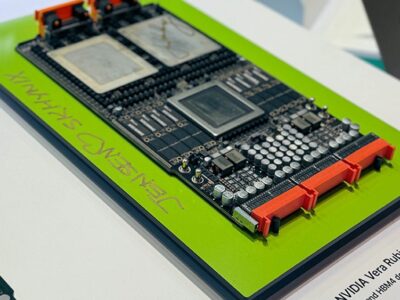

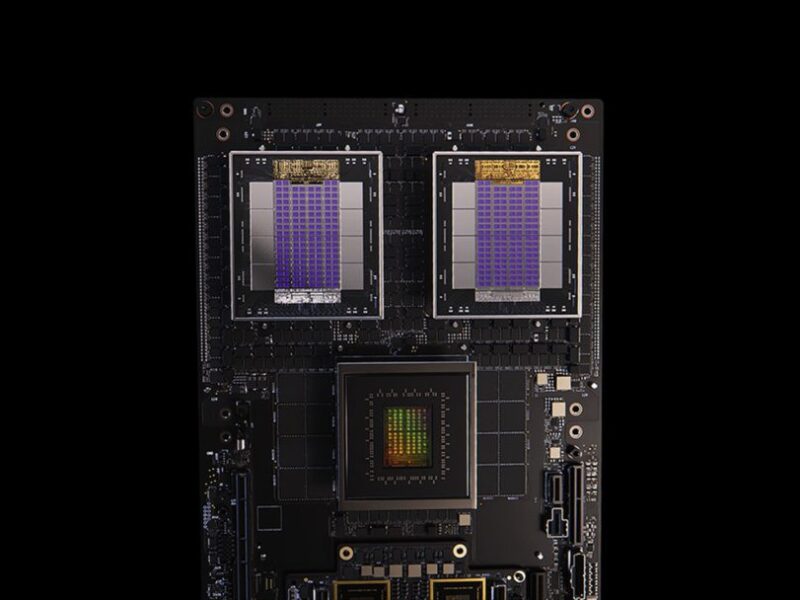

The NVLink Fusion programme allows third-party CPUs such as the Nuvia CPU from Qualcomm or the 2nm Monaka 144-core CPU from Fujitsu to be used with Nvidia Blackwell GPUs. The Nvidia Grace CPU can also be used with third-party custom AI accelerators developed by Alchip.

“NVLink Fusion is opening up the platform for custom AI compute and rack designs,” said Dion Harris, senior director of HPC and AI factories at Nvidia. “This can be a custom CPU + Blackwell or Grace + custom AI compute.”

“Combining Fujitsu’s advanced CPU technology with Nvidia’s full-stack AI infrastructure delivers new levels of performance,” said Vivek Mahajan, CTO at Fujitsu. “Directly connecting our technologies to Nvidia’s architecture marks a monumental step forward in our vision to drive the evolution of AI through world-leading computing technology, paving the way for a new class of scalable, sovereign and sustainable AI systems.”

However, this opens up another front in the battle between Qualcomm and ARM. Qualcomm’s custom datacentre chips, acquired with startup Nuvia, have been at the centre of a dispute over the licensing of custom CPU cores. This weekend, the CEOs of both ARM and Qualcomm were in Saudi Arabia.

The country has ordered 18,000 GB300 Grace Blackwell chips for a single Nvidia supercomputer for a Saudi state-owned AI company called Humain, with “several hundred thousand” more chips planned for the country over the next five years.

Humain and Qualcomm Technologies will also develop datacentre CPUs and AI, and will use the Snapdragon and Dragonwing processors. NVLink Fusion is expected to be a key part of that development.

“Qualcomm Technologies’ advanced custom CPU technology with NVIDIA’s full-stack AI platform brings powerful, efficient intelligence to datacentre infrastructure,” said Cristiano Amon, president and CEO of Qualcomm Technologies. “With the ability to connect our custom processors to Nvidia’s rack-scale architecture, we’re advancing our vision of high-performance, energy-efficient computing to the data centre.”

“A tectonic shift is underway: for the first time in decades, datacentres must be fundamentally rearchitected — AI is being fused into every computing platform,” said Jensen Huang, founder and CEO of Nvidia launching the programme at Computex in Taiwan today. “NVLink Fusion opens our AI platform and rich ecosystem for partners to build specialized AI infrastructures.”

The programme uses a chiplet version of the NVLink interconnect chip, announced back in 2022, to provide scalable data links for millions of GPUs in an AI factory. It can be used with any ASIC, with Nvidia's rack-scale systems and with its end-to-end networking platform over either Ethernet or InfiniBand.

“We are working with a number of IP providers such as Synopsys and Cadence to allow customers to build the NVLink Fusion integration into their custom designs,” said Harris. “There are two key configurations, and the first is to integrate via C2C on the custom CPU to our GPU. The other configuration allows an IO chip that has taped out to integrate to NVLink.”

As well as Fujitsu and Qualcomm, partners for the programme include interconnect chip designers Astera Labs and Marvell as well as MediaTek and Alchip. EDA and IP support comes from Cadence and Synopsys for adding the NVLink chiplets to other CPUs or GPUs.

Marvell is providing expertise in system and semiconductor design as well as IP, including electrical and optical serializer/deserializers (SerDes), die-to-die interconnects for 2D and 3D devices, advanced packaging, silicon photonics, co-packaged copper, custom high-bandwidth memory (HBM), system-on-chip (SoC) fabrics, optical IO, and compute fabric interfaces such as PCIe 7.0.

Nvidia has also launched a new, single-chip version of its Grace CPU, the C1, that can be used in the Fusion programme. The previous Grace superchip had two dies, so the C1 will be more suited to server boards that use the AMD GPU. The C1 is being used by server makers such as Jabil, Foxconn, Supermicro and Quanta.

“Alchip is supporting adoption of NVLink Fusion by broadening its availability through a design and manufacturing ecosystem, encompassing advanced processes and proven packaging and supported by the ASIC industry’s most flexible engagement,” said Johnny Shen, CEO of Alchip. “It’s our contribution to ensuring that the next generation of AI models can be trained and deployed efficiently to meet the demands of tomorrow’s intelligent applications.”

“HPC and AI workload demands are unique and evolving rapidly, and hyperscalers architecting the most advanced custom AI systems rely on Cadence to deliver enabling technology from data centres to the edge,” said Boyd Phelps, senior vice president and general manager of the Silicon Solutions Group at Cadence. The company earlier this month launched its own supercomputer for EDA acceleration based on Nvidia GPUs.

“Our comprehensive IP portfolio, including design IP, chiplet infrastructure, subsystems and other critical IP, complements the NVLink ecosystem, accelerating the delivery of AI factories that are powerful, energy-efficient and production-ready at scale.”

