AMD details GPU for exascale computing

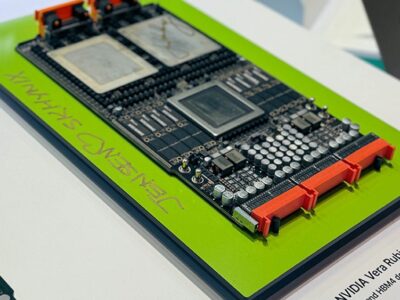

AMD has launched a graphics processor optimised for high performance computing (HPC) rather than graphics.

The Instinct MI100 accelerator marks the divergence of the two types of GPU, delivering 11.5 teraflops of 64-bit floating point performance from an array of 120 compute units and 7680 streaming processors in a 300W power envelope for supercomputers and data centres.

“Each era has unique characteristics for compute,” said Brad McCready, VP for data centre GPU accelerators at AMD. “We have moved from CPUs carrying the weight of the computation as we needed a boost to keep performance moving forward using general purpose GPUs. We believe we need another boost to move into the exascale era. AI is driving new workloads, again diversifying the workloads that GPUs are carrying. This is the first GPU to break the 10 TFLOP barrier,” he said.

The 120 compute units (CUs) are arranged in four arrays. These are derived from the earlier GCN architecture and execute flows of data, or wavefronts, that contain 64 work items. AMD has added a Matrix Core Engine to each compute unit that is optimized for operating on matrix datatypes, from 8-bit integer to 64-bit floating point, boosting throughput and power efficiency.

The classic GCN compute cores contain a variety of pipelines optimized for scalar and vector instructions. In particular, each CU contains a scalar register file, a scalar execution unit, and a scalar data cache to handle instructions that are shared across the wavefront, such as common control logic or address calculations. Similarly, the CUs also contain four large vector register files, four vector execution units that are optimized for FP32, and a vector data cache. Generally, the vector pipelines are 16-wide and each 64-wide wavefront is executed over four cycles.
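The figures in this description fit together, and a few lines of Python make the arithmetic explicit. This is only an illustrative model: the function name and constants are hypothetical labels for the numbers quoted in the article, not part of any AMD tool or API.

```python
# Illustrative model only: names below are hypothetical, not AMD APIs.
WAVEFRONT_SIZE = 64      # work items per wavefront (from the article)
SIMD_WIDTH = 16          # lanes per vector pipeline (from the article)
VECTOR_UNITS_PER_CU = 4  # vector execution units per CU (from the article)
COMPUTE_UNITS = 120      # CUs in the MI100 (from the article)


def issue_schedule(wavefront_size=WAVEFRONT_SIZE, simd_width=SIMD_WIDTH):
    """Return, for each cycle, the work-item IDs processed on that cycle."""
    return [
        list(range(start, min(start + simd_width, wavefront_size)))
        for start in range(0, wavefront_size, simd_width)
    ]


schedule = issue_schedule()
cycles_per_instruction = len(schedule)           # 16 items per cycle
lanes_per_cu = VECTOR_UNITS_PER_CU * SIMD_WIDTH  # vector lanes in one CU
total_lanes = lanes_per_cu * COMPUTE_UNITS       # vector lanes on the chip

print(cycles_per_instruction)  # 4
print(total_lanes)             # 7680
```

Four 16-wide vector units per CU across 120 CUs gives 7680 lanes, which matches the stream processor count AMD quotes.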

The Matrix engine adds a new family of wavefront-level instructions, the Matrix Fused Multiply-Add or MFMA. The MFMA family performs mixed-precision arithmetic and operates on KxN matrices using four different types of input data: 8-bit integers (INT8), 16-bit half-precision FP (FP16), 16-bit brain FP (bf16), and 32-bit single-precision (FP32). All MFMA instructions produce either 32-bit integer (INT32) or FP32 output, which reduces the likelihood of overflow during the final accumulation stages of a matrix multiplication.
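To see why the wider output type matters, consider the integer case. The sketch below is a plain Python model of a multiply-add, D = A×B + C, with INT8-range inputs. It is not MFMA code and does not reflect the real instruction block shapes or AMD's intrinsics. The helper names `wrap_int8` and `matmul_add` are hypothetical, and the 8-bit wrap-around is simulated purely for comparison.

```python
def wrap_int8(value):
    """Wrap an integer into the signed 8-bit range, like a narrow accumulator."""
    return (value + 128) % 256 - 128


def matmul_add(a, b, c, wrap=None):
    """Compute D = A*B + C on lists of lists, optionally wrapping each partial sum."""
    rows, inner, cols = len(a), len(b), len(b[0])
    d = [[0] * cols for _ in range(rows)]
    for i in range(rows):
        for j in range(cols):
            acc = c[i][j]
            for k in range(inner):
                acc += a[i][k] * b[k][j]
                if wrap is not None:
                    acc = wrap(acc)  # simulate an accumulator that is too narrow
            d[i][j] = acc
    return d


# All inputs fit in INT8 (-128..127).
a = [[100, 100], [-50, 25]]
b = [[100, 0], [100, 1]]
c = [[0, 0], [0, 0]]

wide = matmul_add(a, b, c)                    # accumulate at full width
narrow = matmul_add(a, b, c, wrap=wrap_int8)  # accumulate in simulated INT8

print(wide)    # [[20000, 100], [-2500, 25]]
print(narrow)  # [[32, 100], [60, 25]]
```

Even a two-term dot product of INT8 values can exceed the INT8 range, while every result here sits comfortably inside INT32.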

“The Matrix Core moves into hardware the matrix operations for supercomputing workloads – that provides a 7x performance improvement,” said McCready.

The chips use the latest PCI Express connections to servers and also bring out AMD’s on-die Infinity Fabric, allowing up to four GPU accelerator cards to be connected together in a topological cube.

“Four chips can be connected using our Infinity architecture with coherency rather than PCIe Gen4 for a fully connected cube. We physically implement it with a bridge card that goes across the top of the rack with 576Gb/s,” he said. This provides up to 340 GB/s of aggregate bandwidth per card.

Memory bandwidth is also important. “We also get 20 percent memory improvement using HBM2,” said McCready. The accelerator cards support 32 GB of HBM2 memory at a clock rate of 1.2 GHz and deliver 1.23 TB/s of memory bandwidth to support large data sets and help eliminate bottlenecks in moving data in and out of memory.
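The 1.23 TB/s figure can be reproduced from the 1.2 GHz clock with a short calculation. Two inputs are assumptions rather than figures from the article: that HBM2 transfers data on both clock edges, and that the memory interface is 4096 bits wide. The function name is hypothetical.

```python
# Assumptions not stated in the article: double-data-rate transfers and a
# 4096-bit memory interface (four 1024-bit HBM2 stacks).
MEMORY_CLOCK_HZ = 1.2e9   # from the article
TRANSFERS_PER_CLOCK = 2   # assumed: double data rate
BUS_WIDTH_BITS = 4096     # assumed: interface width


def peak_bandwidth_bytes_per_s(clock_hz, transfers_per_clock, bus_width_bits):
    """Theoretical peak bandwidth: transfers per second times bytes per transfer."""
    return clock_hz * transfers_per_clock * bus_width_bits / 8


bandwidth = peak_bandwidth_bytes_per_s(
    MEMORY_CLOCK_HZ, TRANSFERS_PER_CLOCK, BUS_WIDTH_BITS
)
print(f"{bandwidth / 1e12:.2f} TB/s")  # 1.23 TB/s
```

This is a theoretical peak; sustained bandwidth in real workloads would be lower.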

The chip is being used by Dell, Gigabyte, HPE and Supermicro in systems that pair it with AMD’s 2nd Gen EPYC processors.

“Customers use HPE Apollo systems for purpose-built capabilities and performance to tackle a range of complex, data-intensive workloads across high-performance computing (HPC), deep learning and analytics,” said Bill Mannel, vice president and general manager, HPC at HPE. “With the introduction of the new HPE Apollo 6500 Gen10 Plus system, we are further advancing our portfolio to improve workload performance by supporting the new AMD Instinct MI100 accelerator, which enables greater connectivity and data processing, alongside the 2nd Gen AMD EPYC™ processor. We look forward to continuing our collaboration with AMD to expand our offerings with its latest CPUs and accelerators.”

“Dell EMC PowerEdge servers will support the new AMD Instinct MI100, which will enable faster insights from data. This would help our customers achieve more robust and efficient HPC and AI results rapidly,” said Ravi Pendekanti, senior vice president, PowerEdge Servers, Dell Technologies. “AMD has been a valued partner in our support for advancing innovation in the data center. The high-performance capabilities of AMD Instinct accelerators are a natural fit for our PowerEdge server AI & HPC portfolio.”

The technology has already been tested by HPC developers at the Oak Ridge National Laboratory in the US.

“We’ve received early access to the MI100 accelerator, and the preliminary results are very encouraging. We’ve typically seen significant performance boosts, up to 2-3x compared to other GPUs,” said Bronson Messer, director of science, Oak Ridge Leadership Computing Facility. “What’s also important to recognize is the impact software has on performance. The fact that the ROCm open software platform and HIP developer tool are open source and work on a variety of platforms, it is something that we have been absolutely almost obsessed with since we fielded the very first hybrid CPU/GPU system.”
