Spanish RISC-V IP developer Semidynamics has benchmarked the performance of its Tensor Unit running a Llama-2 7B-parameter Large Language Model (LLM) on an ‘all in one’ RISC-V AI IP core.

Semidynamics ran the full Llama-2 7B-parameter model (BF16 weights) on its All-In-One element, using its ONNX Runtime Execution Provider, and calculated the utilization of the Tensor Unit for all the matrix multiplication layers in the model.

The benchmark demonstrates the combination of the Tensor Unit with the Gazzillion streaming data management IP. This is key for LLMs, which use transformer networks that are memory bound. Semidynamics reports Tensor Unit utilization above 80% for most use cases and matrix shapes, including sparse networks, regardless of the size of the matrices, which it says is in sharp contrast with other architectures.
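The memory-bound behaviour mentioned above can be illustrated with a rough arithmetic-intensity estimate. The sketch below is not taken from the Semidynamics benchmark: the function name, the 4096 x 4096 weight shape and the assumption that each matrix is moved through memory exactly once are illustrative choices. It only counts floating-point operations per byte for a BF16 (2 bytes per element) matrix multiplication.

```python
def matmul_arithmetic_intensity(m: int, k: int, n: int, bytes_per_element: int = 2) -> float:
    """FLOPs per byte for C[m, n] = A[m, k] @ B[k, n].

    Assumes (for illustration) that A, B and C each cross the memory
    interface exactly once; bytes_per_element=2 corresponds to BF16.
    """
    flops = 2 * m * k * n  # one multiply and one add per inner-product term
    bytes_moved = bytes_per_element * (m * k + k * n + m * n)
    return flops / bytes_moved


# Hypothetical shapes: a single token (one activation row) against a
# 4096 x 4096 weight matrix, versus 512 rows reusing the same weights.
single_token = matmul_arithmetic_intensity(1, 4096, 4096)
batch_of_rows = matmul_arithmetic_intensity(512, 4096, 4096)

print(f"single token : {single_token:.2f} FLOPs/byte")
print(f"512 rows     : {batch_of_rows:.2f} FLOPs/byte")
```

With one activation row, every weight is fetched for a single multiply-add, so the ratio sits close to one operation per byte and throughput is set by how fast weights arrive from memory rather than by the multiplier array.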

- Spanish startup performs RISC-V open core surgery

- First fully coherent RISC-V Tensor unit for AI chip design

- Semidynamics launches configurable RISC-V vector unit

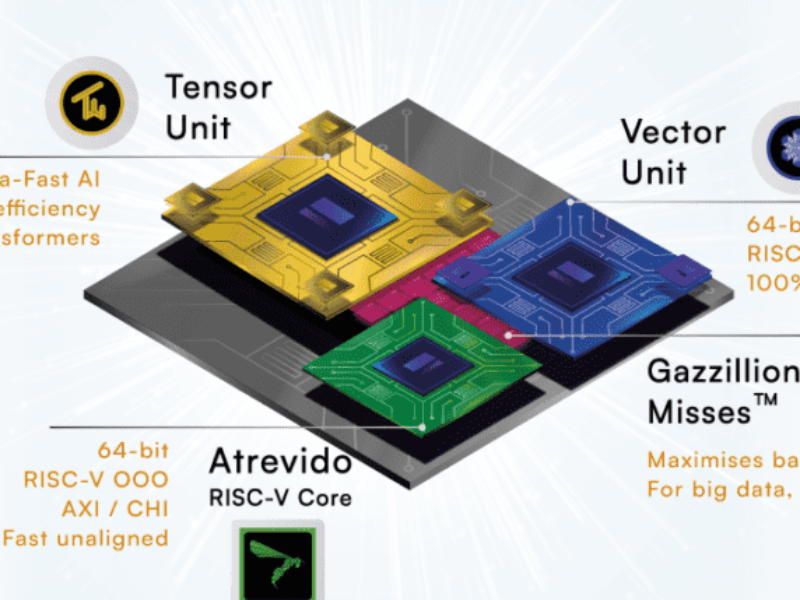

“The traditional AI design uses three separate computing elements: a CPU, a GPU (Graphics Processing Unit) and an NPU (Neural Processing Unit) connected through a bus. This traditional architecture requires DMA-intensive programming, which is error-prone, slow, and energy-hungry, plus the challenge of having to integrate three different software stacks and architectures. In addition, NPUs are fixed-function hardware that cannot adapt to future AI algorithms that have yet to be invented,” said Roger Espasa, CEO of Semidynamics.

“In contrast, Semidynamics has re-invented AI architecture and integrates the three elements into a single, scalable processing element. We combine a RISC-V core, a Tensor Unit that handles matrix multiplication (playing the role of the NPU) and a Vector Unit that handles activation-like computations (playing the role of the GPU) into a fully integrated, all-in-one compute element. Our new architecture is DMA-free, uses a single software stack based on ONNX and RISC-V and offers direct, zero-latency connectivity between the three elements. The result is higher performance, lower power, better area and a much easier-to-program environment, lowering overall development costs.

“Because the Tensor and Vector Units are under the direct control of a flexible CPU, we can deploy any existing or future AI algorithm, providing great protection to our customers’ investments,” he said.

The self-attention layers used in LLMs rely on five matrix multiplications (MatMul), a matrix Transpose and a SoftMax activation function. The Tensor Unit (TU) takes care of the matrix multiplications, whereas the Vector Unit (VU) efficiently handles Transpose and SoftMax. Since the Tensor and Vector Units share the vector registers, expensive memory copies can be largely avoided, which removes the latency and energy cost of transferring data from the MatMul layers to the activation layers and vice versa.
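As a rough illustration of the operations named above, here is a minimal single-head self-attention sketch in plain Python. It is a reading of the paragraph, not Semidynamics code: the helper names are hypothetical, and the mapping of the "five" MatMuls to the Q, K and V projections plus the two attention products is an assumption. Multi-head splitting, causal masking and the output projection are left out for brevity.

```python
import math

Matrix = list[list[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain matrix product: the kind of work the article assigns to the Tensor Unit."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(m: Matrix) -> Matrix:
    """Swap rows and columns: the kind of work the article assigns to the Vector Unit."""
    return [list(col) for col in zip(*m)]


def softmax_rows(m: Matrix) -> Matrix:
    """Row-wise SoftMax, subtracting the row maximum for numerical stability."""
    out = []
    for row in m:
        peak = max(row)
        exps = [math.exp(v - peak) for v in row]
        total = sum(exps)
        out.append([e / total for e in exps])
    return out


def self_attention(x: Matrix, w_q: Matrix, w_k: Matrix, w_v: Matrix) -> Matrix:
    q = matmul(x, w_q)                 # MatMul 1: query projection
    k = matmul(x, w_k)                 # MatMul 2: key projection
    v = matmul(x, w_v)                 # MatMul 3: value projection
    scale = 1.0 / math.sqrt(len(q[0]))
    scores = matmul(q, transpose(k))   # Transpose, then MatMul 4: Q times K^T
    scores = [[s * scale for s in row] for row in scores]
    weights = softmax_rows(scores)     # SoftMax over each row of scores
    return matmul(weights, v)          # MatMul 5: attention weights times V


# Tiny usage example with identity projections (illustrative values only).
identity = [[1.0, 0.0], [0.0, 1.0]]
tokens = [[1.0, 0.0], [0.0, 1.0]]
attended = self_attention(tokens, identity, identity, identity)
```

Each MatMul result feeds directly into a Transpose, scaling or SoftMax step, which is the hand-off the article says benefits from the two units sharing vector registers.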

To keep the TU and the VU continuously busy, weights and inputs must be efficiently fetched from memory into the vector registers. The Gazzillion Misses technology supports a large number of in-flight cache misses so that data can be fetched ahead of time to provide high resource utilization. A custom tensor extension also includes new vector instructions optimized for fetching and transposing 2D tiles, greatly improving tensor processing.
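Why a large number of in-flight cache misses matters can be estimated with Little's law: the data that must be outstanding equals the target bandwidth multiplied by the memory latency. The sketch below is a back-of-the-envelope calculation only. The function name and the example figures (50 GB/s, 100 ns, 64-byte cache lines) are hypothetical and are not Semidynamics specifications.

```python
import math


def in_flight_misses_needed(bandwidth_gb_s: float, latency_ns: float, line_bytes: int = 64) -> int:
    """Outstanding cache-line requests needed to sustain a target bandwidth.

    Little's law: requests in flight = throughput x latency.
    GB/s multiplied by ns gives bytes, since 1e9 * 1e-9 = 1.
    """
    bytes_in_flight = bandwidth_gb_s * latency_ns
    return math.ceil(bytes_in_flight / line_bytes)


# Hypothetical memory system: 50 GB/s target, 100 ns latency, 64-byte lines.
needed = in_flight_misses_needed(50, 100, 64)
print(f"cache lines that must be in flight: {needed}")
```

A core that can track only a handful of outstanding misses would stall long before reaching such a figure, which is the gap that fetching data ahead of time is meant to close.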

“Our new All-In-One AI IP not only delivers outstanding AI performance but is also so much easier to program as there is now just one software stack instead of three. Developers can use the RISC-V stack they already know and they do not have to worry about software-managed local SRAMs, or DMAs,” said Espasa.

Semidynamics provides an ONNX runtime optimized for the All-In-One AI IP, which allows programmers to easily run ML models. The company is at the RISC-V Summit Europe in Munich this week.
