From implantable retinal pixels to visual cortex stimulation

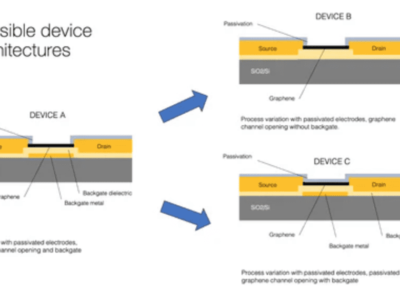

Founded in late 2011, Pixium Vision has successfully carried out several clinical trials with its IRIS I implant (approximately 50 electrodes), and in February this year it performed the first implantation of an improved version, IRIS II, featuring 150 electrodes. IRIS is only the implanted part of the system, with its electrode array affixed onto the retina. It consists of tiny electrodes at the tip of a flexible circuit, an infrared photodetector cell, and a small ASIC in charge of multiplexing the IR signals received by the photodetector and mapping them to the relevant electrodes. The electrical signals then stimulate the ganglion cells, whose axons directly form the optic nerve fibres, sending the perceived signal to the brain.

Image caption (partial): … of the flexible foil, controlled by the encapsulated ASIC.

The wearable part, a pair of goggles equipped with a proprietary event-based camera, processes the visual scene in front of the wearer and beams the encoded information through the eye to the IRIS implant. The ASIC is powered wirelessly through two inductive coils (one of them in the goggles’ casing).
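To make the goggles’ processing step more concrete, here is a minimal sketch of how a camera frame might be reduced to one on/off value per electrode before transmission. Every specific in it is an assumption for illustration: the hypothetical `frame_to_electrodes` function, a 10 × 15 rectangular grid standing in for the 150 electrodes of IRIS II, plain block averaging, and a 0.5 threshold. Pixium Vision’s actual event-based encoding is proprietary and is not described in the article.

```python
# Hypothetical sketch: reduce a grayscale frame to one on/off value per
# electrode. The 10 x 15 grid (150 electrodes) and the 0.5 threshold are
# illustrative assumptions, not Pixium Vision's actual layout or encoding.
from typing import List

Frame = List[List[float]]  # rows of pixel intensities in [0.0, 1.0]


def frame_to_electrodes(frame: Frame, rows: int = 10, cols: int = 15,
                        threshold: float = 0.5) -> List[List[int]]:
    """Block-average the frame onto a rows x cols grid, then binarize."""
    height, width = len(frame), len(frame[0])
    if height % rows or width % cols:
        raise ValueError("frame size must be a multiple of the electrode grid")
    bh, bw = height // rows, width // cols  # camera pixels per electrode block
    grid = []
    for r in range(rows):
        row = []
        for c in range(cols):
            block = [frame[y][x]
                     for y in range(r * bh, (r + 1) * bh)
                     for x in range(c * bw, (c + 1) * bw)]
            mean = sum(block) / len(block)
            # 1 = stimulate this electrode, 0 = leave it off
            row.append(1 if mean >= threshold else 0)
        grid.append(row)
    return grid


# Usage: a 40 x 60 frame whose left half is bright.
frame = [[1.0 if x < 30 else 0.0 for x in range(60)] for _ in range(40)]
grid = frame_to_electrodes(frame)
print(sum(grid[0]))  # 8 active electrodes in the first row
```

Block averaging is simply the most direct way to cut resolution down to the electrode count; a real system would also have to encode stimulation timing and amplitude, which this sketch ignores.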

IRIS was developed for patients with Retinitis Pigmentosa (RP), a genetic disease that affects about 1 in 4,000 people and typically leaves patients blind by their forties. The surgical procedure takes about 2.5 to 3 hours. Within a couple of weeks, once the operated eye has recovered, patients can start perceiving patterns (hugely simplified black-and-white scenes) and training their brains to make sense of this newfound vision. Training procedures include identifying shapes and localizing light blocks on a screen; in some cases, a complete software remapping of the signals to the electrodes may be necessary to compensate for less receptive areas of the retina.
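The per-patient software remapping can be pictured as a lookup table between the logical image grid and the physical electrodes. The sketch below is hypothetical: the `remap` function, the choice to drop signals aimed at unresponsive sites, and the optional per-electrode gain are illustrative assumptions, not a description of Pixium Vision’s fitting software.

```python
# Hypothetical sketch of a per-patient electrode remapping table.
from typing import Dict, List, Optional


def remap(values: List[float],
          mapping: Dict[int, Optional[int]],
          gains: Dict[int, float],
          n_electrodes: int) -> List[float]:
    """Route logical image values to physical electrodes.

    mapping: logical index -> physical electrode, or None to drop the value
             (e.g. an area of retina that does not respond). Indices absent
             from the mapping go to the electrode with the same index.
    gains:   optional per-electrode scaling, e.g. for less sensitive areas.
    """
    out = [0.0] * n_electrodes
    for logical, value in enumerate(values):
        physical = mapping.get(logical, logical)
        if physical is None:
            continue  # no usable electrode for this position
        # If several logical positions share one electrode, keep the strongest.
        out[physical] = max(out[physical], value * gains.get(physical, 1.0))
    return out


# Usage: position 2 is dropped and position 3 is rerouted to electrode 1.
print(remap([1.0, 0.0, 1.0, 1.0], {2: None, 3: 1}, {0: 1.5}, 4))
# prints [1.5, 1.0, 0.0, 0.0]
```

Keeping the remapping in a table rather than in the image processing means a clinician could, in principle, refit a patient without touching the rest of the pipeline.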

“Why only 150 electrodes?”, I candidly asked Khalid Ishaque, Pixium Vision’s CEO, during an interview at the company’s impressive R&D centre and headquarters in central Paris, next to the Institut de la Vision and the Hôpital Quinze-Vingts.

“The electrodes are not the limiting factor here, but it is very complex to route all the signals from the ASIC to the electrodes on such a narrow flexible foil tip. We could not make the flexible foil much larger as it would make surgery more challenging; a larger slit in the eye would make it riskier to seal the scleral opening”, Ishaque explained. Indeed, the ASIC and powering coil are located outside the eyeball.

“We already have an ASIC design to manage over a thousand electrodes, but further shrinking the wires on that flexible foil is a challenge”, he conceded, “but we can take IRIS to over a thousand electrodes within the next two to three years”.

The company is expecting a CE mark for commercialization in Europe within the next few months.

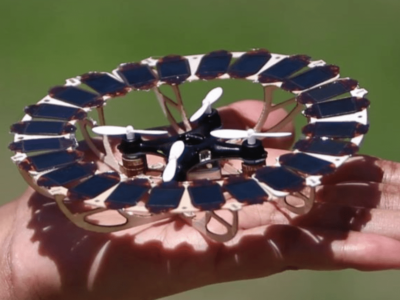

Now, taking the same visual input from its head-mounted ATIS sensor, the company is concurrently developing PRIMA: essentially tiny modular arrays of wireless photovoltaic cells to be implanted just under the retina, with electrodes placed in close proximity to the eye’s bipolar cells. The surgery to implant PRIMA could potentially take less than an hour and would also be much less risky, since the device would be contained entirely within the eye, with no other control circuitry, wires or cables.

Here, there is no more guesswork and no need for a multiplexing ASIC, the CEO explained: the photovoltaic cells receive their input as a near-infrared (NIR) beam from the head-mounted goggles and fire their electrical signals directly from their surface electrodes into the bipolar cells of the retina.

“In Age-related Macular Degeneration (AMD), these cells are still functional”, Ishaque noted, “so by stimulating these cells, we keep most of the natural signal chain up to the optic nerve. This should make it easier for the brain to figure out what the visual stimulus corresponds to, and we would have much less pre-processing or encoding to do, with arguably faster learning too”.

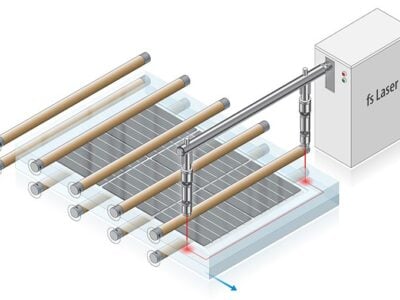

AMD is a much more prevalent condition, with over 4 million cases across Europe and the US, but patients are also typically older, usually over 70. That is why PRIMA, with its low-risk surgery, could advantageously replace IRIS for these patients. PRIMA is designed as hexagonal pixels, each made of 2 or 3 photovoltaic cells connected in series that drive one central electrode. The company has designed them in various sizes, ranging from 140µm down to 70µm.
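The benefit of shrinking the pixels can be estimated from simple geometry. Assuming the quoted sizes are the flat-to-flat widths of regular hexagons tiled without gaps (an assumption, since the article does not define the dimension), each pixel covers (√3/2)·d², so halving the pitch quadruples the number of pixels per unit area. The helper names below are illustrative.

```python
import math


def hex_pixel_area_um2(pitch_um: float) -> float:
    """Area of a regular hexagon whose flat-to-flat width equals the pitch."""
    return math.sqrt(3) / 2 * pitch_um ** 2


def pixels_per_mm2(pitch_um: float) -> float:
    """Pixel density for a gap-free hexagonal tiling (1 mm^2 = 1e6 um^2)."""
    return 1e6 / hex_pixel_area_um2(pitch_um)


# Sizes mentioned in the article: 140 um, 70 um and the 40 um Stanford work.
for pitch in (140, 70, 40):
    print(f"{pitch:>3} um pitch: {pixels_per_mm2(pitch):6.1f} pixels/mm^2")
```

Under these assumptions the 140µm, 70µm and 40µm pitches give roughly 59, 236 and 722 pixels per mm² respectively. The sketch only counts pixels; it does not model the series-connected photovoltaic cells inside each pixel.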

“The average diameter of a bipolar cell is about 10µm. The smaller size and much higher pixel density of PRIMA, together with local return electrodes, enable finer stimulation: each pixel would stimulate fewer cells than the larger electrode arrays currently used to stimulate ganglion cells. The signal coding will be different and is also expected to take advantage of more physiological processing and network mediation. The invisible NIR light would directly ‘draw’ the visual data from our sensor onto the centre of the retina covered with these modular photovoltaic cells, and the brain will interpret these new artificial signals”, said Ishaque.
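One way to picture the NIR light “drawing” an image onto the implant is to sample the projected image at the centre of each hexagonal pixel. The sketch below does exactly that under hypothetical assumptions: each photovoltaic pixel responds to the irradiance at its centre, the projected image has a known scale in µm per image pixel, and alternate lattice rows are shifted by half a pitch. `hex_centers` and `sample_on_hex` are illustrative names, not part of any Pixium Vision software.

```python
import math
from typing import List, Tuple


def hex_centers(width_um: float, height_um: float,
                pitch_um: float) -> List[Tuple[float, float]]:
    """Centres of hexagonal pixels tiling a width x height rectangle.

    Rows are pitch * sqrt(3)/2 apart and odd rows are shifted by half a
    pitch, which is how regular hexagons of flat-to-flat width pitch pack.
    """
    row_step = pitch_um * math.sqrt(3) / 2
    n_rows = math.floor((height_um - pitch_um) / row_step) + 1
    centers: List[Tuple[float, float]] = []
    for r in range(max(n_rows, 0)):
        y = pitch_um / 2 + r * row_step
        x0 = pitch_um / 2 if r % 2 == 0 else pitch_um  # half-pitch offset
        n_cols = math.floor((width_um - x0 - pitch_um / 2) / pitch_um) + 1
        centers.extend((x0 + c * pitch_um, y) for c in range(max(n_cols, 0)))
    return centers


def sample_on_hex(image: List[List[float]], um_per_px: float,
                  pitch_um: float) -> List[Tuple[Tuple[float, float], float]]:
    """Return ((x, y), intensity) for each hexagonal pixel centre."""
    height, width = len(image), len(image[0])
    samples = []
    for x, y in hex_centers(width * um_per_px, height * um_per_px, pitch_um):
        col = min(int(x / um_per_px), width - 1)
        row = min(int(y / um_per_px), height - 1)
        samples.append(((x, y), image[row][col]))
    return samples


# Usage: a 10 x 10 image at 100 um per image pixel, bright on the left half,
# projected onto a 1 mm x 1 mm patch of 100 um hexagonal pixels.
image = [[1.0 if c < 5 else 0.0 for c in range(10)] for _ in range(10)]
samples = sample_on_hex(image, um_per_px=100.0, pitch_um=100.0)
print(len(samples))  # 105 hexagonal pixels
```

Point sampling at pixel centres is the simplest choice; averaging the irradiance over each hexagon would be closer to how a photodiode integrates light, at the cost of more code.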

The technology for PRIMA was first developed by Daniel Palanker and his group at the Stanford University School of Medicine, and they are already working on 40µm clusters.

If proven successful, PRIMA could address the much larger AMD market, hopefully at a much more attractive price too, since each wafer yields thousands of implants. Having published a number of pre-clinical safety studies, Pixium Vision hopes to start its first human feasibility study by the end of this year, followed by a larger pivotal trial starting in 2017.
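The “thousands of implants per wafer” figure can be sanity-checked with the standard dies-per-wafer approximation, DPW ≈ π(d/2)²/S − πd/√(2S), where d is the wafer diameter and S the die area. The 150 mm wafer and 2 mm × 2 mm implant used below are hypothetical values chosen for illustration, not figures from Pixium Vision, and the formula ignores scribe lines and yield losses.

```python
import math


def dies_per_wafer(wafer_diameter_mm: float, die_area_mm2: float) -> int:
    """Gross dies per wafer: wafer area / die area, minus an edge-loss term."""
    wafer_area = math.pi * (wafer_diameter_mm / 2) ** 2
    # Partial dies lost around the circular edge of the wafer.
    edge_loss = math.pi * wafer_diameter_mm / math.sqrt(2 * die_area_mm2)
    return math.floor(wafer_area / die_area_mm2 - edge_loss)


# Hypothetical example: 2 mm x 2 mm implants on a 150 mm wafer.
print(dies_per_wafer(150, 2 * 2))  # prints 4251
```

Even after yield losses, an estimate of this order is consistent with the article’s “thousands of implants” per wafer.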

“With the potential for several thousand electrodes, we are looking at visual acuity high enough for face recognition”, commented Ishaque. “With AMD, patients lose their central vision; we aim to give them artificial central vision”.

Eventually, PRIMA could even supersede IRIS completely. As for stereoscopic vision, Ishaque sees it as a natural progression: “Once the technology is stable, we would equip the goggles with two sensors and address both eyes at the same time”.

But the startup does not want to stop there.

“What about glaucoma, where the optic nerve no longer carries retinal signals to the brain? How about neuropathies or traumatic brain injuries, or conditions where the eye is missing altogether?” asked Ishaque, before offering a futuristic answer.

“In our global scientific and medical network, we have ophthalmic surgeons but also neurosurgeons interfacing the eye and the brain, from photons to neurons. The next step would be to directly stimulate the surface of the visual cortex in the brain with vision signals. The EU research framework programmes and the Defense Advanced Research Projects Agency (DARPA) in the US, for example, are very interested in this”, said Pixium Vision’s CEO.

“Having hardware and software is not enough; you also have to understand wetware and how the visual cortex receives and processes the signals. In ongoing research with partners at the Institut de la Vision, CEA and Stanford, the visual pathways from the retina to the visual cortex are being mapped, identifying which visual signals activate which regions of the visual cortex. So eventually, we would send the pre-processed data from our vision sensor, safely and selectively, directly to the visual cortex”, he continued.

Image caption (partial): … of conventional cameras; (right) continuous-time acquisition of visual motion as performed by the ATIS sensor.

Key to succeeding with such an approach is the company’s bioinspired asynchronous visual sensor, ATIS (Asynchronous Time-based Image Sensor). Its autonomous pixels are built to record transient events rather than contribute to a static greyscale image, as ordinary cameras do.

Just like in the biological eye, the sensor’s pixel circuits encode transient information (light changes) into the precise timing of spikes while sustained information (light intensity) is encoded using a simple spike rate coding scheme. The continuous stream of spikes encodes the visual information in a language the brain can directly interpret, at the native temporal resolution of retinal ganglion cells (around 1ms) and at a dynamic range exceeding that of the human eye, writes the company on its website.
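The two coding schemes described above can be illustrated in a few lines. This is a simplified software model, not the ATIS pixel circuit. `change_events` emits a timestamped ON/OFF event whenever a pixel’s log intensity moves by more than a threshold since its last event (the temporal-contrast principle used by event-based sensors). `rate_code` turns a sustained intensity into a regular spike train whose rate scales with intensity, following the company’s description. The 1 ms step matches the resolution quoted above; the 0.15 threshold, 200 Hz maximum rate and 100 ms window are arbitrary assumptions.

```python
import math
from typing import Dict, List, Tuple

# (time in ms, x, y, polarity): +1 = pixel got brighter, -1 = darker
Event = Tuple[int, int, int, int]


def change_events(frames: List[List[List[float]]], threshold: float = 0.15,
                  dt_ms: int = 1) -> List[Event]:
    """Emit an event when a pixel's log intensity changes by >= threshold.

    `frames` are intensity samples taken every `dt_ms`; static pixels emit
    nothing, so only transients produce output. This simplified model emits
    at most one event per pixel per time step.
    """
    reference: Dict[Tuple[int, int], float] = {}
    events: List[Event] = []
    for i, frame in enumerate(frames):
        for y, row in enumerate(frame):
            for x, intensity in enumerate(row):
                level = math.log(max(intensity, 1e-6))  # avoid log(0)
                if (x, y) not in reference:
                    reference[(x, y)] = level  # first sample sets the baseline
                    continue
                delta = level - reference[(x, y)]
                if abs(delta) >= threshold:
                    events.append((i * dt_ms, x, y, 1 if delta > 0 else -1))
                    reference[(x, y)] = level
    return events


def rate_code(intensity: float, window_ms: int = 100,
              max_rate_hz: float = 200.0) -> List[int]:
    """Spike times (ms) of a regular train whose rate scales with intensity."""
    rate_hz = max_rate_hz * min(max(intensity, 0.0), 1.0)
    if rate_hz == 0.0:
        return []
    period_ms = 1000.0 / rate_hz
    n_spikes = int(window_ms * rate_hz // 1000)
    return [round(k * period_ms) for k in range(n_spikes)]


frames = [[[1.0, 1.0]], [[2.0, 1.0]], [[2.0, 0.5]]]
print(change_events(frames))  # [(1, 0, 0, 1), (2, 1, 0, -1)]
print(rate_code(0.5)[:3])     # [0, 10, 20]
```

Working on log intensity makes the change detector respond to relative contrast rather than absolute brightness, which is one reason event-based sensors can cover a wide dynamic range.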

“Depending on research progress and of course funding, the first human clinical trials could become feasible within the next 2 to 3 years. Of course, here you would have to implant electrodes to directly stimulate the visual cortex; such interventions are more invasive, and the visual cortex is much more complex. But DARPA is pursuing something similar with its recently announced Neural Engineering System Design (NESD) program”, the CEO added.

DARPA has called for proposals to create a real-time implantable neural interface and could award up to US$60 million in funding to the right candidates for this four-year program.

“DARPA directly contacted specific international consortia, including the Vision Institute and CEA. Their moonshot is to develop and validate a neural interface system capable of recording from 1 million neurons and stimulating more than 100,000 neurons in the human sensory cortex”, commented Ishaque.

Regarding pricing, the IRIS system could cost around 90,000 to 100,000 euros, and the startup thinks it could break even after selling a few hundred units, depending also on how quickly reimbursement is granted in socialized healthcare systems. The system could also be reimbursed through private health insurance, and its price is peanuts compared with the direct and indirect costs to the healthcare system and society of supporting a blind person today: Ishaque cited an average cost of 40,000 euros per year, borne by society and insurers.
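A rough way to read these figures is to compare the system price with the annual cost of supporting a blind person. Taking the quoted 40,000 euros per year at face value, and ignoring surgery, follow-up care and discounting (simplifying assumptions), the price corresponds to:

```latex
\frac{90\,000~\text{EUR}}{40\,000~\text{EUR/year}} = 2.25~\text{years},
\qquad
\frac{100\,000~\text{EUR}}{40\,000~\text{EUR/year}} = 2.5~\text{years}
```

Under these assumptions, the system costs about as much as two to two and a half years of support.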

Visit Pixium Vision at www.pixium-vision.com

Related news:

Solar retinal implants may restore sight to the blind

Asynch image sensor boosts machine vision

Haptic lens converts light into touch

Haptic prosthesis gives back missing limb’s natural feel

If you enjoyed this article, you will like the following ones: don't miss them by subscribing to :

eeNews on Google News
